2023-10

Automated Service Ticket Analysis System

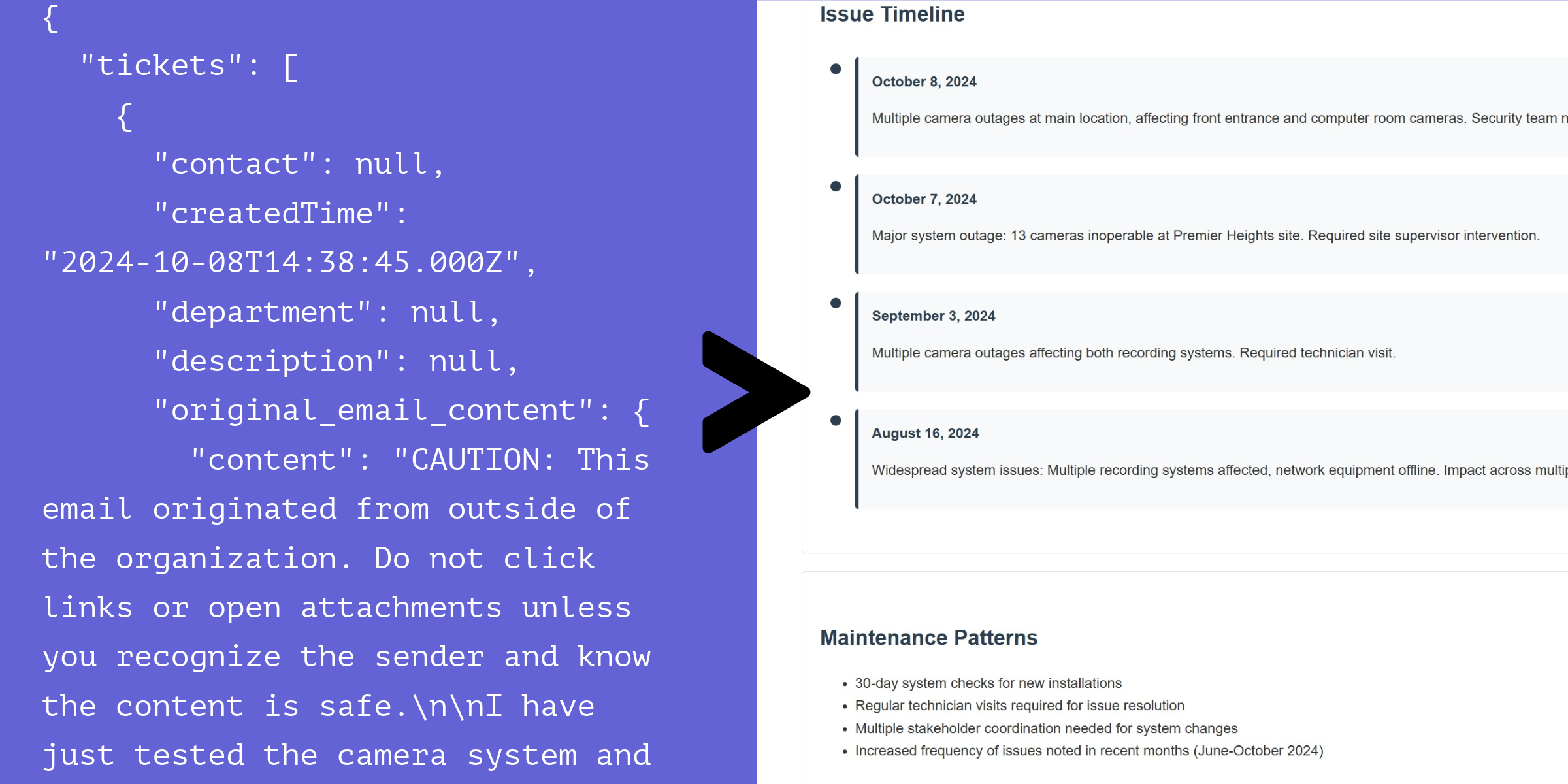

Turning maintenance records into structured, actionable data

Overview

Manual review of service tickets is slow, inconsistent, and doesn't scale. At a physical security company managing hundreds of maintenance records per month, technicians were filling out free-form tickets with no standard format — making pattern recognition and trend analysis nearly impossible.

I built an automated analysis system that uses Llama 3.2 to parse unstructured maintenance tickets and extract structured, consistent data. The result: a system that ingests a raw ticket and outputs a clean JSON object containing the issue date, affected equipment, fault classification, and severity — regardless of how the original technician wrote it.

Technical Approach

The core challenge wasn't the LLM call itself — it was getting reliable, consistent output across wildly different ticket formats. A ticket might say "door controller #4 acting up again" or it might have a paragraph of notes with an embedded date and part numbers. Both need to produce the same schema.

Two-stage extraction pipeline

I used an iterative approach: Stage 1 focuses on high-fidelity extraction with no strict formatting requirements. Stage 2 takes that output and re-structures it into the target JSON schema. This separation dramatically reduced hallucination compared to asking for structured JSON directly.

def process_with_llama(ticket, max_retries=5, initial_wait=1):

client = llama.Client()

prompt = f"""

You are an AI assistant analyzing a customer support ticket.

Extract key information including: date, main issue, affected equipment,

and ticket classification.

Guidelines:

- Answer only from the ticket content provided

- If information is unavailable, mark it as null

- Prioritize fidelity over formatting in this stage

- Include all relevant details even if they don't fit standard categories

Ticket content:

{json.dumps(ticket, indent=2)}

"""Resilient API integration

Llama API calls at volume require intelligent retry handling. I implemented exponential backoff with jitter and tracked per-request rate limit consumption to avoid throttling:

for attempt in range(max_retries):

try:

response = client.chat.completions.create(

model="llama3.2",

messages=[{"role": "user", "content": prompt}]

)

return response.choices[0].message.content

except RateLimitError:

wait = initial_wait * (2 ** attempt) + random.uniform(0, 1)

time.sleep(wait)Flexible JSON schema

The output schema was designed to be lenient — required fields like date and issue_summary are always present, but an additional_details bag captures anything the model found that didn't fit the predefined categories.

Business Impact

- Before: Technicians manually reviewed tickets for patterns. This took hours per week and only happened retrospectively.

- After: Every ticket is auto-tagged on ingestion. Management can query "all motor failure tickets in Q3" in seconds.

Automated trend analysis surfaced a recurring door controller issue that had been written off as one-offs — catching it earlier than any manual review would have.

What I'd Do Differently

Running two LLM passes per ticket adds latency and cost. With more training data, a fine-tuned extraction model would be faster and cheaper than chaining two general-purpose calls.