2026-02

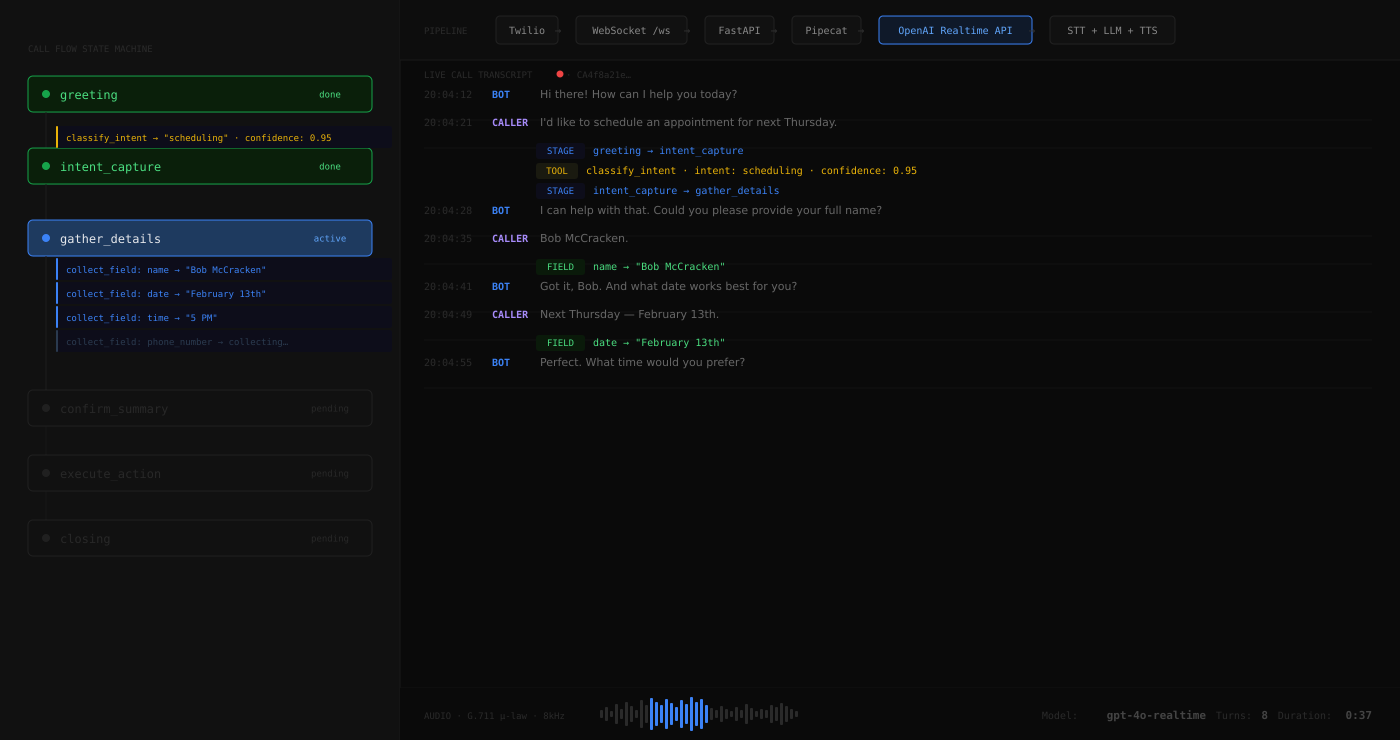

OpenAI Realtime Voice Bot

A production phone agent that handles inbound calls with a finite state machine

Overview

Most voice bot demos answer a question and hang up. This one handles a full call flow — greeting, intent classification, multi-field data collection, confirmation, correction, execution, and goodbye — entirely through voice, without a human in the loop.

The bot runs on Twilio, uses the OpenAI Realtime API for speech-to-text, LLM reasoning, and text-to-speech in a single WebSocket connection, and enforces a finite state machine so the conversation always moves forward in a predictable way. Every call produces a structured audit log.

Architecture

    Twilio Call
        │
        ▼
    WebSocket (/ws)
        │
        ▼
    FastAPI Server
        │
        ▼
    Pipecat Pipeline:
        transport.input()
        → CallFlowStateTracker        ← state machine + tool handlers
        → LLMUserAggregator
        → OpenAIRealtimeLLMService    ← STT + LLM + TTS in one WebSocket
        → transport.output()
        → LLMAssistantAggregator
        → TranscriptFinalizer

The OpenAI Realtime API handles speech-to-text, inference, and text-to-speech in one connection, with no pipeline of separate services. Audio arrives as G.711 u-law (Twilio's native format), with no resampling needed. Server-side VAD handles turn detection.

Each call gets a fully isolated pipeline instance — no shared mutable state between concurrent calls.
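A minimal sketch of what per-call isolation can look like, assuming state is held in a dataclass that is constructed once per inbound connection. The field names and the `new_call_state` helper are illustrative, not taken from the project.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CallState:
    """Hypothetical per-call state; field names are illustrative."""
    call_sid: str
    stage: str = "greeting"
    intent: Optional[str] = None
    # default_factory gives every instance its own dict. A module-level
    # dict shared by all calls would leak fields between callers.
    fields: dict = field(default_factory=dict)


def new_call_state(call_sid: str) -> CallState:
    """Build fresh state for one inbound call (assumed: once per WebSocket)."""
    return CallState(call_sid=call_sid)


a = new_call_state("CA-aaa")
b = new_call_state("CA-bbb")
a.fields["date"] = "Tuesday"
a.stage = "gather_details"
print(b.stage, b.fields)  # greeting {}
```

Mutating one call's state leaves the other untouched because nothing is shared at module or class level.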

Call Flow State Machine

    greeting
      │
      ▼
    intent_capture ◄────────────────────────────┐
      │                                         │
      ├─ classify_intent (confidence ≥ 0.7)     │
      │    ├─ known intent ──► gather_details   │
      │    ├─ general_inquiry ──► quick_answer  │
      │    └─ unknown ──► escalate              │
      │                                         │
    gather_details ──► confirm_summary ─────────┤
      │                                         │
    (confirmed)                                 │
      │                                         │
    execute_action                              │
      │                                         │
    closing ── "anything else?"                 ┘

The machine has eight stages. Each stage defines which tools the LLM is allowed to call. Tools are the only way state transitions happen: the LLM cannot jump stages by producing text.
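The gating idea can be sketched roughly as follows. This is not the project's code: the table covers a single stage, the `call_tool` helper is hypothetical, and staying in `intent_capture` on a low-confidence result is an assumption about how the 0.7 threshold is applied.

```python
from dataclasses import dataclass
from typing import Optional

CONFIDENCE_THRESHOLD = 0.7
# "scheduling" appears in the sample audit log; the full intent set is not known here.
KNOWN_INTENTS = {"scheduling"}

# Partial stage -> allowed-tools table (assumed shape; other stages omitted).
ALLOWED_TOOLS = {
    "intent_capture": {"classify_intent"},
}


@dataclass
class CallState:
    stage: str = "intent_capture"
    intent: Optional[str] = None


def call_tool(state: CallState, tool: str, **args) -> str:
    """Apply a tool call if the current stage permits it; return the resulting stage."""
    if tool not in ALLOWED_TOOLS.get(state.stage, set()):
        raise PermissionError(f"{tool} is not allowed in stage {state.stage}")
    if tool == "classify_intent":
        if args["confidence"] < CONFIDENCE_THRESHOLD:
            return state.stage  # assumption: stay put and ask the caller again
        state.intent = args["intent"]
        if state.intent in KNOWN_INTENTS:
            state.stage = "gather_details"
        elif state.intent == "general_inquiry":
            state.stage = "quick_answer"
        else:
            state.stage = "escalate"
    return state.stage


state = CallState()
print(call_tool(state, "classify_intent", intent="scheduling", confidence=0.55))  # intent_capture
print(call_tool(state, "classify_intent", intent="scheduling", confidence=0.92))  # gather_details
```

Because the stage only changes inside a tool handler, text output from the model has no path to `state.stage`. A call to `classify_intent` from `gather_details` raises `PermissionError` in this sketch.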

Keeping the LLM on the Rails

Getting an LLM to reliably operate a state machine over a voice call requires more than a good system prompt. Three enforcement layers work together:

Directive prompts. Each stage uses explicit MUST/NEVER language and numbered rules. The prompt tells the LLM exactly which tool to call and when, rather than describing in general terms what each tool is for.

Turn-count watchdog. The CallFlowStateTracker tracks consecutive user turns without a tool call. After two turns in a tool-required stage (intent_capture, gather_details), it injects a system message forcing the expected tool call.

Parameter validation. Tool handlers use state-of-record values rather than trusting LLM arguments. execute_action reads state.intent, not the LLM's action_type parameter — the LLM cannot corrupt state by hallucinating a field value.
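A rough sketch of the parameter-validation layer, assuming a handler that receives the call state alongside the LLM's arguments. The return shape and the `llm_arg_mismatch` flag are illustrative additions, not part of the project.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CallState:
    intent: Optional[str] = None
    fields: dict = field(default_factory=dict)


def execute_action(state: CallState, action_type: str) -> dict:
    """Tool handler sketch: act on the recorded intent, not the LLM's argument."""
    # state.intent was set earlier by classify_intent, so it is the value of record.
    return {
        "action": state.intent,
        "fields": dict(state.fields),
        # Hypothetical flag: record disagreement so it can be logged.
        "llm_arg_mismatch": action_type != state.intent,
    }


state = CallState(intent="scheduling", fields={"date": "Tuesday"})
result = execute_action(state, action_type="cancellation")  # hallucinated argument
print(result["action"])            # scheduling
print(result["llm_arg_mismatch"])  # True
```

The LLM's `action_type` is only compared against the stored value. It never decides which action runs.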

Audit Logging

Every call produces two files in logs/:

JSONL. One event per line, machine-parseable:

    {"ts":"...","event":"stage_transition","data":{"from":"greeting","to":"intent_capture"}}
    {"ts":"...","event":"tool_call","data":{"tool":"classify_intent","arguments":{...}}}
    {"ts":"...","event":"call_end","data":{"intent":"scheduling","duration_s":125.3,"turns":14}}

TXT transcript. Human-readable, with inline stage transitions and tool calls; useful for reviewing a call without replaying it.
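A writer for the JSONL format could look something like the sketch below. The `AuditLogger` class, the ISO 8601 timestamp, the file name, and the `classify_intent` arguments are all assumptions for illustration. Only the `ts` / `event` / `data` keys come from the sample lines.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


class AuditLogger:
    """Hypothetical append-only JSONL logger, one instance per call."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.path.parent.mkdir(parents=True, exist_ok=True)

    def log(self, event: str, data: dict) -> None:
        record = {
            "ts": datetime.now(timezone.utc).isoformat(),  # assumed timestamp format
            "event": event,
            "data": data,
        }
        # One compact JSON object per line keeps the file greppable and streamable.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(record, separators=(",", ":")) + "\n")


def read_events(path: Path) -> list:
    """Parse a JSONL audit log back into a list of dicts."""
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# A temporary directory stands in for logs/ so the sketch leaves no files behind.
log_path = Path(tempfile.mkdtemp()) / "logs" / "call-demo.jsonl"
logger = AuditLogger(log_path)
logger.log("stage_transition", {"from": "greeting", "to": "intent_capture"})
logger.log("tool_call", {"tool": "classify_intent",
                         "arguments": {"intent": "scheduling", "confidence": 0.92}})
events = read_events(log_path)
print([e["event"] for e in events])  # ['stage_transition', 'tool_call']
```

Appending one self-contained line per event means a crash mid-call still leaves every earlier event parseable.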

Test Coverage

The state machine is unit-tested independently of the LLM. 40 assertions across 7 scenarios run without API keys:

- Happy path — scheduling with 5 required fields

- Low confidence intent classification

- Correction loop — confirm → correct → re-confirm

- Quick answer — general inquiry

- Escalation — unknown intent

- Field clarification

- "Anything else?" loop

    python test_flow.py
    # 40 tests, 7 scenarios, no API keys required

Design Decisions

State machine over prompt engineering. A well-crafted prompt might handle 80% of calls correctly. A state machine with prompt enforcement handles the other 20% — the callers who give unclear answers, loop back, or go silent.

Tool calls as the only state transition mechanism. If the LLM produces text without calling a tool in a tool-required stage, the watchdog detects it and forces the call. This prevents the model from drifting forward by narrating what it would do.
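The watchdog logic might be sketched like this, assuming a counter that is reset on every tool call. The `Watchdog` class, the `record_field` tool name for `gather_details`, the nudge wording, and the reset-after-nudge behaviour are hypothetical. The two-turn limit and the two tool-required stages come from the description above.

```python
from dataclasses import dataclass
from typing import Optional

MAX_TURNS_WITHOUT_TOOL = 2
# Stage -> tool the LLM is expected to call there.
# "record_field" is a placeholder name; the source does not name that tool.
EXPECTED_TOOL = {
    "intent_capture": "classify_intent",
    "gather_details": "record_field",
}


@dataclass
class Watchdog:
    stage: str
    turns_without_tool: int = 0

    def on_user_turn(self) -> Optional[str]:
        """Count a user turn; return a system message once the limit is reached."""
        expected = EXPECTED_TOOL.get(self.stage)
        if expected is None:
            return None  # this stage does not require a tool call
        self.turns_without_tool += 1
        if self.turns_without_tool >= MAX_TURNS_WITHOUT_TOOL:
            self.turns_without_tool = 0  # assumption: start counting again after a nudge
            return f"You MUST call {expected} now."
        return None

    def on_tool_call(self) -> None:
        """Any tool call counts as progress and clears the counter."""
        self.turns_without_tool = 0


dog = Watchdog(stage="intent_capture")
print(dog.on_user_turn())  # None
print(dog.on_user_turn())  # You MUST call classify_intent now.
```

In a real pipeline the returned string would be injected into the LLM context as a system message. Here it is simply returned so the logic can be tested without an API key.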

Per-call isolation. Every call gets its own CallState, LLMContext, and logger. Concurrent calls cannot affect each other.

Audit trail by default. Every tool call, stage transition, and utterance is logged in both machine-readable and human-readable formats, which helps with debugging, compliance, and replaying edge cases.